Your New Job Is to Onboard AI Agents: How AI Native Companies Actually Operate

Inside Linear's agent-first workflows, Ramp's 4 levels of AI proficiency, and Factory's playbook for turning expert knowledge into AI skills

Dear subscribers,

I’ve spent the past few months interviewing leaders at AI-native companies. I’m now convinced that:

Onboarding and managing AI agents IS the job, no matter what your function is.

In this free deep dive, I want to share how three AI-native companies (Linear, Ramp, and Factory) put this principle into practice. Here is a quote from each:

Nan Yu (Head of Product, Linear): “You’ll have AI team members that you can assign tasks to and talk to just like how you talk to people.”

Geoff Charles (CPO, Ramp): “If you’re not using Claude Code, no matter what your role is, you’re probably underperforming.”

Eno Reyes (CTO, Factory): “We codified product management, frontend UI, data analysis, and more into reusable skills that any employee can invoke.”

Read on for an inside look at how each AI-native company operates.

Linear: Making AI agents first-class teammates

Linear’s approach to agents is shaped by their product. Nan (Linear’s Head of Product) believes that:

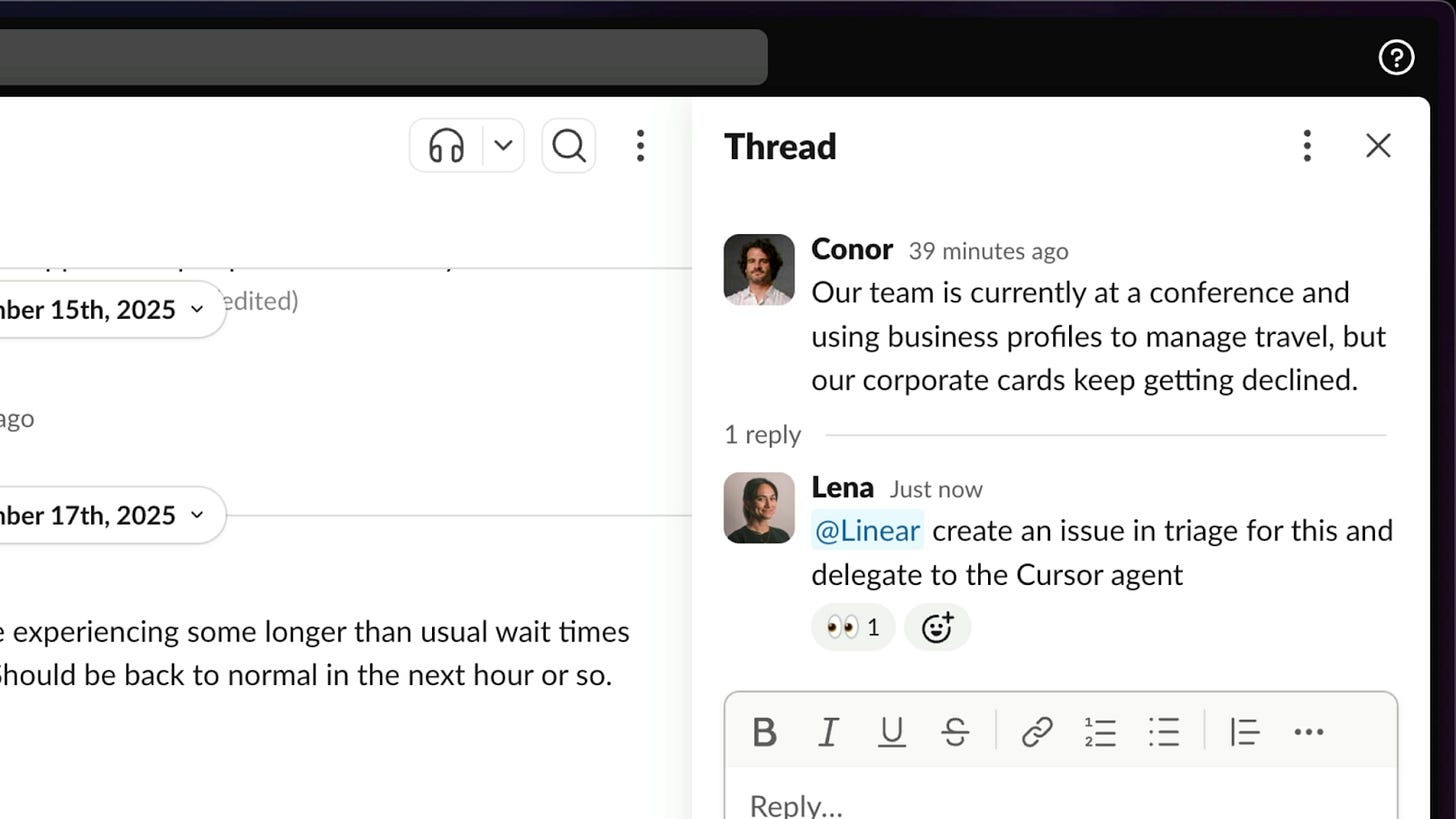

Agents should be first-class employees. You should be able to add them to projects, assign them to issues, and mention them in comments.

However, Nan also believes that the human always stays accountable for the outcome. Here’s how Linear builds products with agents at every step:

Understand the problem. Agents read and summarize every customer conversation from Intercom, Zendesk, and Gong. They auto-create issues, de-dupe them against the backlog, and assign them to the right team.

Identify the solution. Since agents have access to customer conversations, they can help you iterate on a spec by pulling data-backed insights from multiple channels.

Make a plan. Agents can break your spec into concrete tickets and route them to the right teams automatically. At Linear, agents now create the majority of tickets. The human’s job is to review their work and adjust context over time.

Execute. Bugs and small features get assigned directly to agents like Codex and Cursor. For complex features, engineers launch Claude Code and use Linear MCP to pull in full issue context.

From Nan:

It feels like the scope of what agents can handle is expanding every quarter. New models and harnesses are pushing the boundary from simple fixes to increasingly complex projects.
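
To make the de-duplication part of "Understand the problem" concrete, here is a minimal sketch of checking a new issue against the backlog. It is not Linear's implementation: the word-overlap (Jaccard) score, the 0.6 threshold, the `find_duplicate` helper, and the sample issue IDs are all assumptions for illustration. A production agent would more likely compare meaning with a language model than compare words.

```python
import re
from typing import Dict, Optional, Set


def tokens(text: str) -> Set[str]:
    """Lowercase word tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of words two titles have in common (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def find_duplicate(
    new_title: str, backlog: Dict[str, str], threshold: float = 0.6
) -> Optional[str]:
    """Return the ID of the most similar backlog issue, or None if
    nothing clears the (assumed) similarity threshold."""
    best_id, best_score = None, 0.0
    for issue_id, title in backlog.items():
        score = jaccard(new_title, title)
        if score > best_score:
            best_id, best_score = issue_id, score
    return best_id if best_score >= threshold else None


# Hypothetical backlog: issue ID -> title.
backlog = {
    "ISS-1": "Export to CSV fails for large projects",
    "ISS-2": "Dark mode toggle missing on mobile",
}

print(find_duplicate("CSV export fails for large projects", backlog))
print(find_duplicate("Add SSO login", backlog))
```

The first call matches the existing CSV issue, so the agent would attach the new report to it instead of opening a ticket. The second finds nothing similar, so a new issue would be created and routed.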

Want to build with agents like Linear? Here are 4 practical steps Nan shared that your team can take today:

Every developer should default to a leading agentic coding tool. This is the easiest first step. Provide the official tool (Cursor, Claude Code, or Codex) and manage it so you can see utilization.

Supplement with an async cloud coding agent. Async background agents can one-shot most small changes and bug fixes. Cursor and Devin have good offerings here.

Insist that designers and PMs work directly on the codebase. Agents like Claude open a low-friction path for PMs and designers to make changes directly in the codebase. Everyone should strive to be a builder.

PMs and marketers should default to an AI interface. These functions should be doing 80-100% of their work through a chat interface — whether that’s Claude, ChatGPT, Notion AI, or something similar.

Nan sees a future where humans will collaborate with agents at the spec level — defining what needs to be built and why — and then passing things off to agents to handle everything downstream.

Ramp: Measuring AI proficiency in 4 levels

If Linear shows how to make agents a first-class part of your team, then Ramp shows how to get your entire company to adopt them.

In 2025, Ramp shipped 500+ features, reached $1B in revenue, and did it all with 25 PMs.

They did this by requiring every single function (eng, product, design, sales, marketing, legal, finance) to onboard and work with agents.

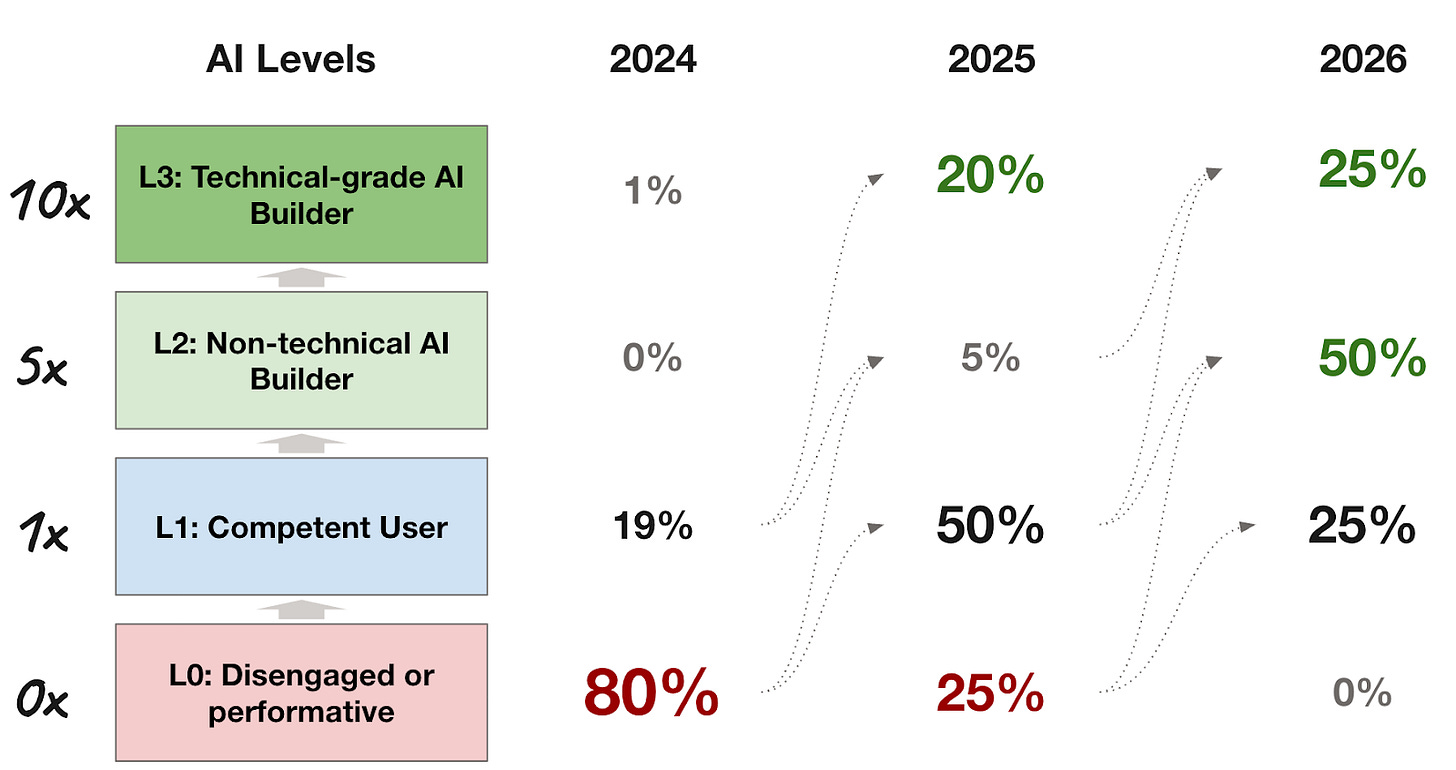

Geoff (Ramp’s CPO) shared a framework for evaluating every employee’s AI proficiency that I find extremely practical:

L0: Sometimes uses ChatGPT. These people will most likely not be at the company long-term. If you’re not a self-starter with a growth mindset toward AI tools, Geoff says it’s going to be very hard to train you to excel.

L1: Uses and tweaks GPTs, projects, and internal AI tools. These people are experimenting with AI but haven’t automated real work yet.

L2: Builds an app that automates part of their job. These people can commit code or give meaningful feedback on other people’s work using AI tools.

L3: Systems builders. These people are building the AI infrastructure and skills that accelerate everyone else on the team.

The company’s goal is to push everyone up the ladder. L0s self-select out. L1s become L2s. L2s become L3s. And L3s influence the rest of the organization.

Geoff also shared 5 steps that any company can take to become AI-native:

Remove all friction. Give access to popular AI tools with no constraints on tokens or budgets and create an internal repository of AI skills anyone can pull from. If the setup is hard, most people won’t adopt the tools.

Make adoption visible. Create public Slack channels where people can share what they built. At all-hands, showcase non-engineers doing impressive things, like finance building a treasury system or marketing automating website creation.

Provide hands-on support. Host office hours that anyone can join to build AI skills and workflows. Have designated AI experts whose entire job is to evangelize, get people set up, and help them reach the “aha moment.”

Track usage and intervene. Ramp tracks token consumption across AI tools per employee. Leadership shares this data to create natural accountability and step in when someone’s usage is low.

Make it a hiring requirement. PM interviews now include a dedicated session where you need to build a working product and then explain why you built it and how it works.
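
The "track usage and intervene" step above can be sketched in a few lines. This is an illustration under stated assumptions, not Ramp's system: the event format, the `tokens_per_employee` and `low_usage` helpers, and the rule of flagging anyone below 25% of the team median are all hypothetical.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, List, Tuple

# Assumed event shape: (employee, tool, tokens used).
UsageEvent = Tuple[str, str, int]


def tokens_per_employee(events: Iterable[UsageEvent]) -> Dict[str, int]:
    """Sum token consumption across all AI tools for each employee."""
    totals: Dict[str, int] = defaultdict(int)
    for employee, _tool, tokens in events:
        totals[employee] += tokens
    return dict(totals)


def low_usage(totals: Dict[str, int], fraction: float = 0.25) -> List[str]:
    """Employees whose total falls below `fraction` of the team median.
    The 25% default is an assumption, not a figure from Ramp."""
    if not totals:
        return []
    cutoff = fraction * median(totals.values())
    return sorted(name for name, total in totals.items() if total < cutoff)


# Hypothetical usage log.
events = [
    ("ana", "claude-code", 120_000),
    ("ana", "chatgpt", 30_000),
    ("ben", "chatgpt", 2_000),
    ("chi", "cursor", 90_000),
]

totals = tokens_per_employee(events)
print(totals)
print(low_usage(totals))
```

Comparing against the team median rather than a fixed number keeps the bar moving as overall adoption grows. The names on the list are a prompt for a conversation or an office-hours invite, not a verdict.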

Geoff summed up his leadership philosophy for every role at Ramp in one line:

Your job is to automate your job.

From Geoff: “If I tell my team 10 times that the CTA needs to be above the fold, the fix isn’t saying it the 11th time. It’s encoding that feedback into an automated design crit process or AI skill so that it never happens again.”

Factory: AI native from day one

If Linear and Ramp show how companies adopt AI agents, Factory shows what happens when you build around them from day one.

Factory is a 55-person AI software development company valued at $300M that’s structured around AI from the ground up. Here’s what makes them different:

Hire product engineers

Factory doesn’t hire PMs and engineers separately. Instead, they hire product engineers who manage and work with AI agents. A typical day looks like:

Examine traces from agent runs to see where the system made poor decisions.

Write fixes not as code, but as governance (e.g., an update to a skill, a new lint rule, or a refined automation).

Review only the PRs that agents flag as high-risk (agents take care of the rest).

Suggest new ideas and debate prioritization with colleagues and AI.

This work isn’t easy and requires deeper expertise, but the leverage is enormous.

Make your codebase agent-ready

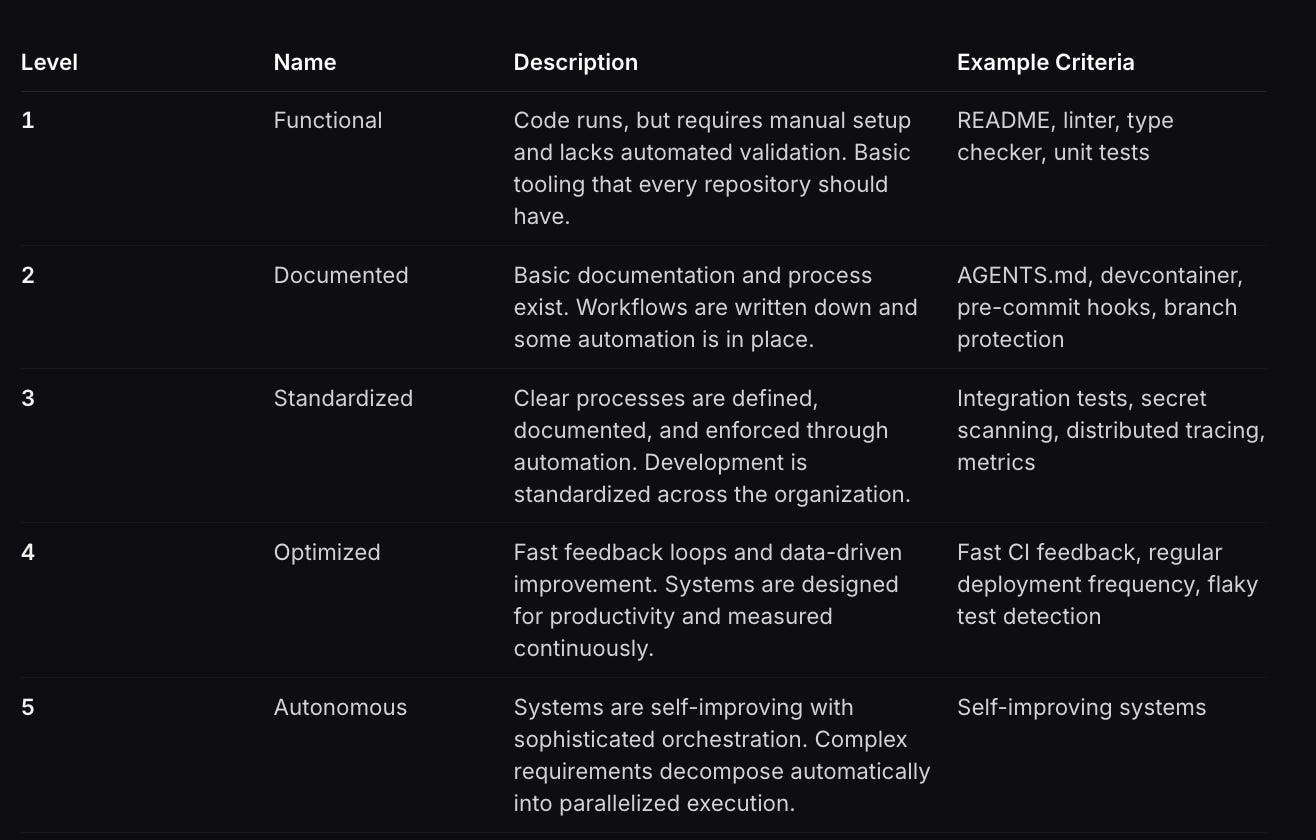

Agents need a codebase they can actually work in to be effective. Factory scores codebases across five maturity levels, and Level 3 ("Standardized") is where most teams need to aim first.

Encode expertise into reusable skills

Once your codebase is agent-ready, the next step is giving agents the knowledge to make good decisions through skills (essentially plain-text markdown files).

Factory uses skills to encode expert and company knowledge into something that any agent or employee can use. Here’s a list of skills that Factory uses internally and links to their markdown files for you to copy and modify:

Product management skill. Product principles, an 11-star experience framework (borrowed from Airbnb’s Brian Chesky), PRD template, scoring rubric, and language guidance all in one markdown file.

Frontend UI integration skill. Instructs Droid on how to build features using Factory’s design system, routing conventions, and testing standards.

AI data analyst skill. Run exploratory analysis, build visualizations, and generate statistical reports using the full Python ecosystem.

Internal tools skill. Build admin panels, support consoles, and operational dashboards with proper access controls and audit logging baked in.

Vibe coding skill. Rapidly prototype new web apps from scratch with modern frameworks.

If you can encode what your best people know into skills, you don’t need to hire specialists for every function.
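
Since a skill is just a markdown file, wiring one into an agent's instructions takes very little code. The sketch below is a simplified assumption, not Factory's or Droid's actual mechanism: the `load_skills` and `build_prompt` helpers, the one-file-per-skill folder layout, and the `design-crit.md` example are hypothetical.

```python
import tempfile
from pathlib import Path
from typing import Dict


def load_skills(skills_dir: str) -> Dict[str, str]:
    """Map each skill name (the file name without .md) to its markdown text."""
    return {
        path.stem: path.read_text(encoding="utf-8")
        for path in sorted(Path(skills_dir).glob("*.md"))
    }


def build_prompt(skills: Dict[str, str], skill_name: str, task: str) -> str:
    """Prepend the chosen skill's guidance to a task description."""
    if skill_name not in skills:
        raise KeyError(f"No skill named {skill_name!r}")
    return f"{skills[skill_name].strip()}\n\n## Task\n{task.strip()}\n"


# Demo with a throwaway folder and one hypothetical skill file.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "design-crit.md").write_text(
        "# Design crit\n- Keep the primary CTA above the fold.\n",
        encoding="utf-8",
    )
    skills = load_skills(tmp)

prompt = build_prompt(skills, "design-crit", "Review the new pricing page.")
print(prompt)
```

Because the skill lives in a file rather than in someone's head, feedback gets written down once and every later agent run (or new hire) picks it up automatically.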

6 steps to put all this into practice

To recap:

Onboarding and managing agents is becoming the core job for every function.

Here are six things you can put into practice right now:

From Linear:

Default every developer to an agentic coding tool like Cursor, Claude Code, or Codex.

Get PMs and designers into the codebase. Let them submit PRs and ship code using agents. Stop routing every small change through an engineer.

From Ramp:

Measure AI proficiency across your team. Ramp’s 4-level framework gives you a shared vocabulary for where people are and where they need to go.

Track AI usage and make it part of performance expectations. You can’t improve what you don’t measure, and incentives matter.

From Factory:

Score your codebase for agent readiness. Use Factory’s agent readiness levels to understand whether your codebase is ready.

Codify your team’s expertise into reusable skills. Encode what your best people know into skill files and make it easy for both humans and agents to use them.

Above all, onboard agents like you’d onboard a human. Give them context, connect them to your operational stack, and keep a human accountable for their outcomes.

Let me know what you think in the comments!
