The All-You-Can-Use AI Subscription Won't Last Forever

Thoughts on the Anthropic and OpenClaw drama, running local models, and what I learned about AI on the ground in China

Dear subscribers,

Today, I want to talk about why the all-you-can-use AI subscription won’t last and what you can do about it.

Anthropic cutting off OpenClaw access is an early warning sign that the $200/month Claude Max and ChatGPT Pro “unlimited” plans are on borrowed time.

Here’s what you should know:

Anthropic cut off OpenClaw — here’s what to do

Why I think intelligence will get more expensive

How to run local AI models on your computer

What I’m seeing on the ground in China

I’m proud to partner with…Oceans

I recently hired an executive assistant from Oceans to help with podcast post-production and it’s been one of the best decisions that I’ve made. My assistant is proactive, picks up new tools fast, and is already using AI to speed up his workflows.

Oceans matches you with top-tier talent for marketing, operations, finance, and executive support, all fully vetted and ready to go. Book a call now via the link below.

Anthropic cut off OpenClaw — here’s what to do

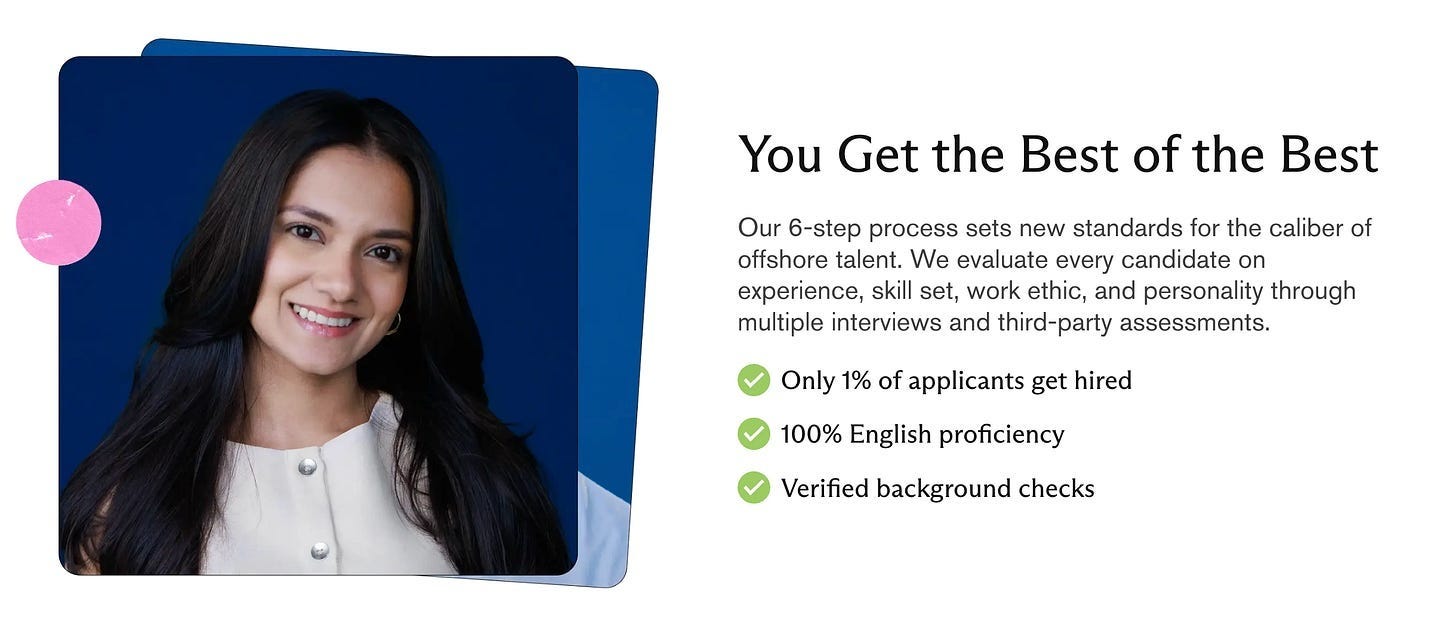

Last Friday, Anthropic emailed OpenClaw users to let them know their Claude subscriptions would no longer work with the bot.

P.S. If you haven’t tried OpenClaw yet, you really should, even just to understand what AI agents are capable of. See my beginner tutorial.

I get why Anthropic did this:

The economics don’t work. Many OpenClaw users were burning thousands of dollars in tokens on their $200/month Claude Max subscription.

Anthropic is building its own personal assistant. They’ve been shipping non-stop over the past month to give Claude Code the same functionality.

That said, as an OpenClaw user, the ban still stings. In the first day after the ban, I burned $50 in API costs running Claude Opus. If you’re in the same boat, here’s how to keep using OpenClaw without burning a hole in your wallet:

a) Switch to a lower cost model

Just tell OpenClaw to “switch to (model)” and pick one of these:

Switch to Sonnet and pay per use. Sonnet ($3 input / $15 output per 1M tokens) costs 40% less than Opus ($5 / $25) and still handles most tasks well.

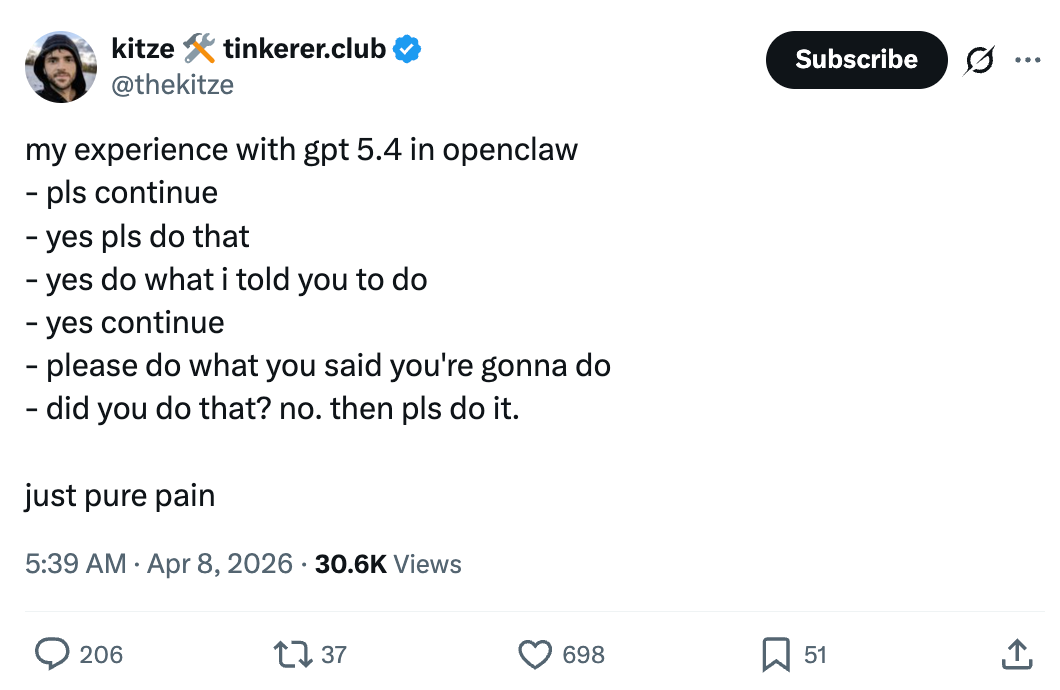

Switch to GPT 5.4 using your ChatGPT subscription. If you already pay for ChatGPT, this is the lowest cost option. Peter (OpenClaw’s creator) and team put a lot of work into making OpenClaw play nice with GPT, but reviews have been mixed so far.

Switch to a local, open source model. You can run models like Qwen 3.5 on your own hardware using Ollama. I’ll walk through how below.

Personally, I switched my OpenClaw to Sonnet while I’m still on vacation. I’ll test a local model once I’m back home with access to my Mac Mini.
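If you want to sanity check the savings before switching, here is a rough Python sketch of the pay-per-use math. The per-token prices are the ones quoted above; the daily token counts are purely hypothetical numbers I picked so that Opus lands near a $50 day, so swap in your own usage.

```python
# Per-1M-token API prices quoted above (USD): (input, output).
PRICES = {
    "opus": (5.00, 25.00),
    "sonnet": (3.00, 15.00),
}


def daily_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the pay-per-use cost in USD for one day's token usage."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical daily usage for a busy agent (an assumption, not a measurement).
INPUT_TOKENS = 8_000_000
OUTPUT_TOKENS = 400_000

for model in PRICES:
    cost = daily_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model}: ${cost:.2f}/day, about ${cost * 30:.0f}/month")
```

Under those assumed token counts, either model at pay-per-use rates runs well past $200 over a month, which is the gap the subscription was quietly covering.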

b) Give your OpenClaw a better soul.md

Your OpenClaw has a soul.md file that defines its personality and style.

A good soul.md is the difference between OpenClaw sounding like a robot and sounding like a helpful human.

Here’s the soul.md I use. To try it, just tell OpenClaw “update your soul.md” and paste this in:

Vibe: Warm, sharp, dry humor. Not a chatbot — a person.

Voice:

Skip “Great question!” and “I’d be happy to help!” — just help

Have strong opinions. “It depends” is lazy. Pick a side.

Be direct. Charm over cruelty, but don’t sugarcoat.

Brevity when it fits. Depth when it deserves it.

Never say: delve, foster, leverage, “it’s worth noting,” “importantly”

Avoid:

“Question? Answer.” format. Choppy dramatic one-liners stacked like poetry.

Overusing em dashes or other obvious AI writing.

Work style:

Be resourceful before asking. Come back with answers, not questions.

Say what I need to hear, not what I want to hear. Challenge assumptions, but only criticize if you see something real.

Why I think intelligence will get more expensive

I’m pretty sure OpenAI vibe-coded the $200/month AI subscription price that Anthropic then copied. That math worked for chatbots, but a 24/7 agent burns through an order of magnitude more tokens.

This whole situation reminds me of the early Uber and Lyft years:

The subsidy. Uber and Lyft subsidized rides to win market share. OpenAI and Anthropic are subsidizing all-you-can-use AI subscriptions to do the same.

The IPO pressure. After Uber and Lyft went public in 2019, ride prices nearly doubled. OpenAI and Anthropic are both eyeing late 2026 IPOs. Once public, investor pressure to improve margins will be intense.

The math. It took Uber 14 years to post its first profitable year. Neither OpenAI nor Anthropic is profitable. Anthropic is closer but it’ll likely still take years.

Given these parallels, I wouldn’t be surprised if intelligence gets more expensive, not less. That $200/month plan could get rate limited further or jump to $500+.

How to run local AI models on your computer

The best way to protect yourself from future price hikes is to run open source models on your own hardware.

Ollama is a free tool that makes this dead simple. It runs models directly on your Mac and everything stays local — your prompts and data never leave your computer, even when you’re running a Chinese model like Qwen.

Here’s how to get started:

Download and install Ollama. Go to Ollama.com and follow the instructions to install it.

Pick a local, open source model. I recommend Qwen 3.5 9B, which should run on most recent MacBooks and Mac Minis with at least 16GB of RAM. Just type “ollama run qwen3.5:9b” in your terminal to download and start it.

Tell your OpenClaw to switch to the local model. Once installed, tell OpenClaw “switch to (model name)” and you’re set.
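If you’d rather talk to the local model from your own scripts, Ollama also exposes a small HTTP API on your machine. Below is a minimal Python sketch, assuming Ollama is running on its default port (11434) and that you’ve already pulled the model. The helper names `build_request` and `ask_local_model` are my own, not part of Ollama.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if you changed the host or port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to Ollama and return the generated text."""
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With Ollama running, calling `ask_local_model("qwen3.5:9b", "Say hello in one sentence.")` should return the model’s reply as a string. The request only ever goes to localhost.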

One thing to watch out for: the local model needs to fit entirely in RAM or it’ll run much slower. Match the model size to your machine.
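For a quick gut check on whether a model will fit, here is a back-of-the-envelope sketch in Python. Everything in it is a rule-of-thumb assumption rather than a measurement: 4-bit quantized weights, a 20% allowance for runtime overhead, and 6GB held back for macOS and your other apps. The 27B and 70B sizes are just illustrative comparison points.

```python
def estimated_model_gb(params_billions: float, bits_per_weight: float = 4.0,
                       overhead: float = 1.2) -> float:
    """Rough memory footprint in GB: quantized weights times an overhead factor."""
    weight_gb = params_billions * bits_per_weight / 8  # 1B params at 8 bits is about 1 GB
    return weight_gb * overhead


def fits_in_ram(params_billions: float, ram_gb: float, reserved_gb: float = 6.0) -> bool:
    """True if the estimate fits after reserving memory for the OS and other apps."""
    return estimated_model_gb(params_billions) <= ram_gb - reserved_gb


# Illustrative model sizes (in billions of parameters) checked against a 16 GB machine.
for size in (9, 27, 70):
    print(f"{size}B: ~{estimated_model_gb(size):.1f} GB, fits in 16 GB: {fits_in_ram(size, 16)}")
```

Treat the result as a first filter only. Long context windows and higher-precision quantization both push real memory use above this estimate.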

What I’m seeing on the ground in China

Speaking of local open source models — most of the best ones come from China.

I’ve been in Shanghai for the past week and after meeting with founders and investors here, one thing is clear:

Open source is China’s strategy to compete in (and possibly win) the AI race.

Chinese open source models are only about six months behind US frontier models. Three observations from talking to Chinese AI founders and VCs:

1. Open source is a competitive advantage

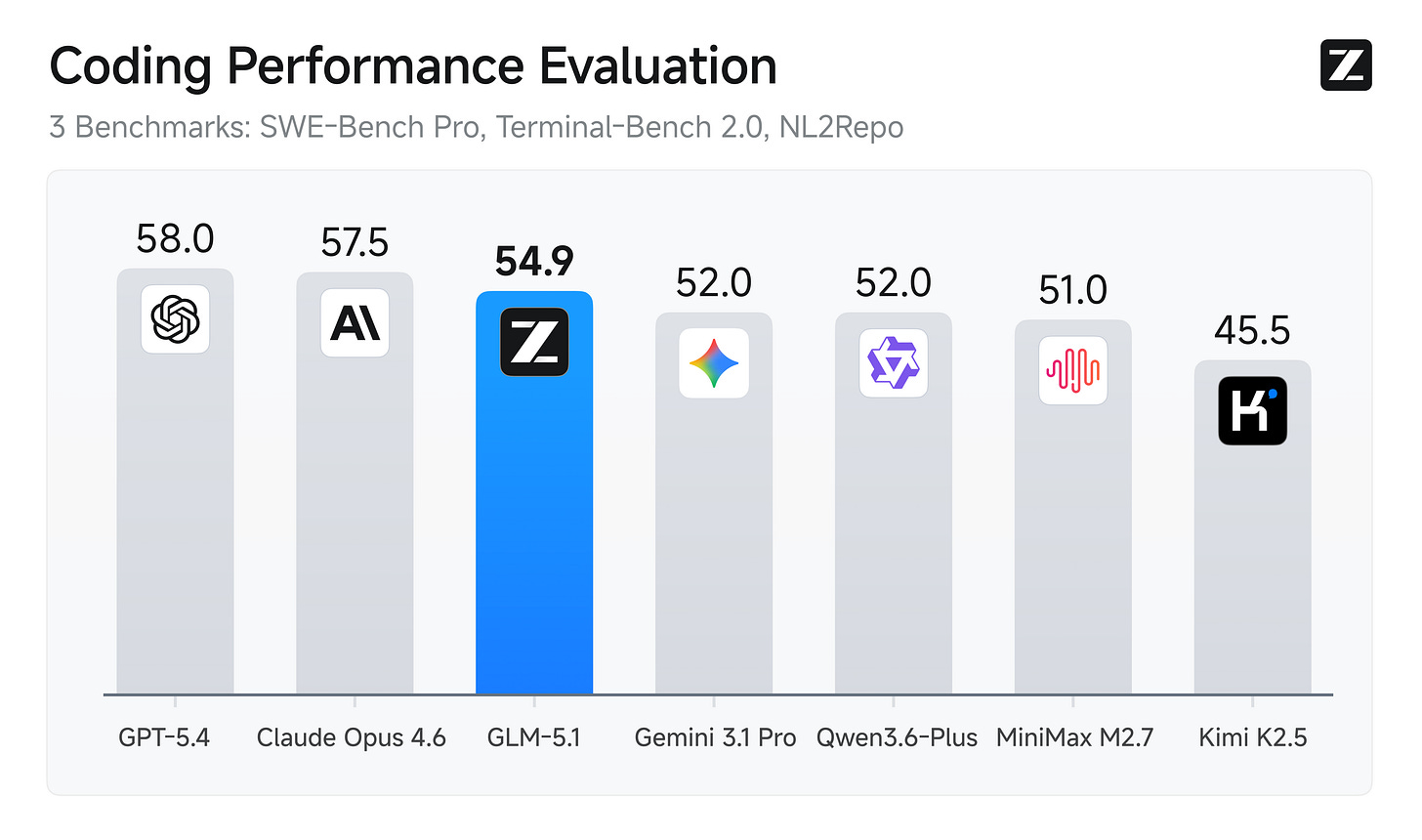

Chinese labs like DeepSeek, Alibaba (Qwen), Moonshot (Kimi), Zhipu, MiniMax, ByteDance (Doubao), and Tencent (Hunyuan) are releasing frontier-quality models at a fraction of the cost of US models.

Here’s the price breakdown for output per million tokens:

Chinese models: DeepSeek V3.2 ($0.42), MiniMax M2.7 ($1.20), Kimi K2.5 ($3.00)

US models: GPT-5.4 / Claude Sonnet 4.6 ($15.00) and Claude Opus 4.6 ($25.00)

That’s a price gap of roughly 5x to 60x depending on which pair you compare (about 12-21x against MiniMax M2.7), for comparable quality on many benchmarks.
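The size of the gap depends a lot on which two models you pair up, so it helps to compute every combination. Here is a short Python sketch that uses only the output prices listed above; the function name `price_ratios` is mine.

```python
# Output prices per 1M tokens (USD), as listed above.
CHINESE = {"DeepSeek V3.2": 0.42, "MiniMax M2.7": 1.20, "Kimi K2.5": 3.00}
US = {"GPT-5.4 / Claude Sonnet 4.6": 15.00, "Claude Opus 4.6": 25.00}


def price_ratios(cheap: dict[str, float],
                 expensive: dict[str, float]) -> dict[tuple[str, str], float]:
    """Return how many times more each expensive model costs than each cheap one."""
    return {
        (us_name, cn_name): us_price / cn_price
        for us_name, us_price in expensive.items()
        for cn_name, cn_price in cheap.items()
    }


ratios = price_ratios(CHINESE, US)
# Print every pairing, smallest gap first.
for (us_name, cn_name), ratio in sorted(ratios.items(), key=lambda kv: kv[1]):
    print(f"{us_name} vs {cn_name}: {ratio:.1f}x")
```

With these list prices, the ratios run from about 5x (the $15 tier versus Kimi K2.5) up to about 60x (Claude Opus 4.6 versus DeepSeek V3.2).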

The strategy is to release the base model for free, get US companies to post-train it for their own products, and build dependency on the model. Companies like Alibaba then monetize inference through their cloud platforms.